Note: I’m writing about this technique to (1) reduce the overhead cost of testing it, and (2) illustrate what I consider good practices for “rolling out” a new technique to be added to a rationality curriculum. Although it seems super-useful from my first-person perspective, experience says the technique itself will probably need to undergo several tests and revisions before it works as intended, even, I suspect, for most readers of my blog.

Recently, I found myself getting stuck on a certain question about my romantic life. Let’s pretend, for the sake of specificity, that the question was: should I date people who are more “chill” and have lots of time to spend with me, or more “driven” (which I also like) but harder to schedule time with? (A false dichotomy, I know, but one must simplify to communicate at all.)

I began by applying my full repertoire of CFAR techniques — mental movements with names like “aversion factoring”, “Murphyjitsu”, and “Gendlin focusing” that I’ve found extremely helpful, some of which I actually helped develop and teach.

But it didn’t work. I was stuck. It had been years since I’d been stuck on figuring out something like this — a testament to how much I’d gotten from working at CFAR — and I’d almost forgotten what it felt like. It was annoying! But maybe I’d picked most of the low-hanging fruit from my life with my current techniques, and whatever remained would necessarily be less tractable. Maybe I’d just have to spend 3 or 4 years dating a variety of people to figure out what driven/chill balance I wanted empirically. Maybe I should just accept that this is a hard problem?

So I decided to dispel my dissatisfaction by writing down a thorough, proof-like argument that I could not in fact easily resolve my dilemma. (I’d recently had luck with a similar method for ruling out approaches in my research.) The argument was a case analysis, because I had to show that none of my problem-solving techniques were likely to work.

A few minutes into the argument, it hit me. “Aha!” You know those “aha” moments, right? They’re not always a big deal, but there’s something qualitatively different about a little “aha!” versus the feeling you get from just following the next step in a pre-planned chain of reasoning. There’s a feeling of creativity, or a sudden changing of gears that feels immediately promising rather than just a matter of course.

“Aha! One of the premises in my proof is just wrong.”

(What I realized specifically was something like this: I wasn’t really stuck choosing between “chill” and “driven” in my partners, but actually, my premise for how I’d like my partner to relate to me being driven was confused. I hadn’t understood yet how I wanted her to feel about that aspect of me, so dating felt sort of aimless. I didn’t know what I was shooting for. I promptly spent 30 minutes figuring that out, and all of a sudden wanted to go on a lot more dates. Yay!)

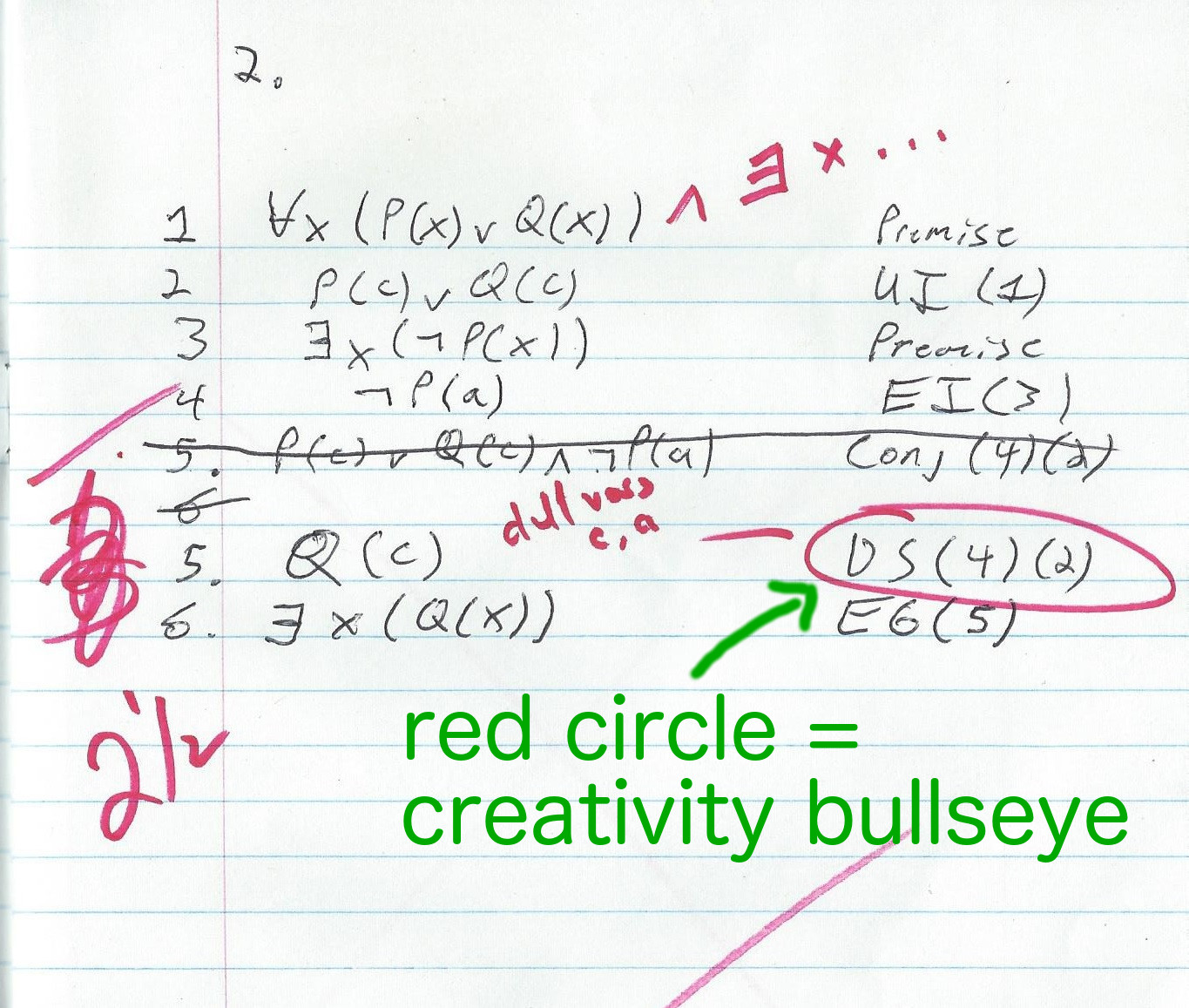

Since then, I’ve been trying more often to resolve tricky problems by failing-to-prove that they’re impossible, and turning that point of failure into a creative attack. It’s been really productive, both inside and outside my research. It’s like… if I flesh out the difficulty of a problem in enough detail to make an argument about its difficulty, and if that argument is itself rigorous enough that my inner Red Pen wants to come out and circle the parts that aren’t well-justified, then I can quickly orient my creativity to attack the weakest point of the argument and produce a positive result.

For this reason, in my inner idiolect I alternate between calling this technique “proof-failing” and “red penning”. I’m not sure how well it will work for others, especially for those without much experience writing (or, even better, grading) mathematical proofs. There might be a few prerequisite skills needed here, like sensing when it’s time to stop proving X and start proving ¬X instead, or noticing which link in a chain of formal arguments is the least rigorous / most suspect.

So in my spare time I’ve begun experimenting with transferring the technique (which surely has some nuances I’ve so far failed to notice or articulate) to a few mathy people first. So far they’ve found it useful, but we’ll see if the trend continues and generalizes.

Now, one problem with assessing the marginal value of a technique like this is that sometimes *any* structured method for solving a problem is an improvement over ad-hoc approaches. So, to control for that somewhat, I’ve started with folks who are not only mathy, but who are also already familiar with the CFAR Core curriculum. And, when testing this new technique, we choose problems that they’ve found resistant to their old techniques. That way, I can also judge better whether Proof Failure / Red Penning is really marginally useful, i.e., worth appending to CFAR’s existing curriculum.

If my prime candidates find the technique useful, I’ll next try teaching it to some folks who know the CFAR curriculum but have less math background. That way I can adjust it and perhaps learn, as I often do, that a less mathy framing works just as well and reaches a wider audience, given a little iteration to find that framing. Finally, if that works, I’ll look at adjusting it for, and teaching it to, some folks who don’t know the CFAR curriculum at all. If they also find it useful, maybe CFAR Core will want to add it to their curriculum, which is meant to form more of a base than a tower.

Hopefully this blog post will help me try it out with a few more people at a lower overhead cost, and also illustrate what I consider good practices for assessing whether a new technique should be added to an applied rationality curriculum (rather than merely replacing an old technique, which is also a good thing to do, but requires a different kind of assessment).

Stay tuned!

Here’s a possible way to describe this technique (or a closely related one) to anyone who can think and write clearly, not using any math/proofs/CFAR concepts:

Try writing a letter to a sympathetic person (real or imaginary) whom you trust, but who knows nothing of your specific circumstances. In the letter, explain your dilemma in detail, and explain why it’s a problem: why the obvious solutions won’t work. Try systematically listing all possible solutions and explaining exactly why they won’t work.

While you do this, or when you read it afterwards, does some part of it seem too vague, or dubious, or wrong?